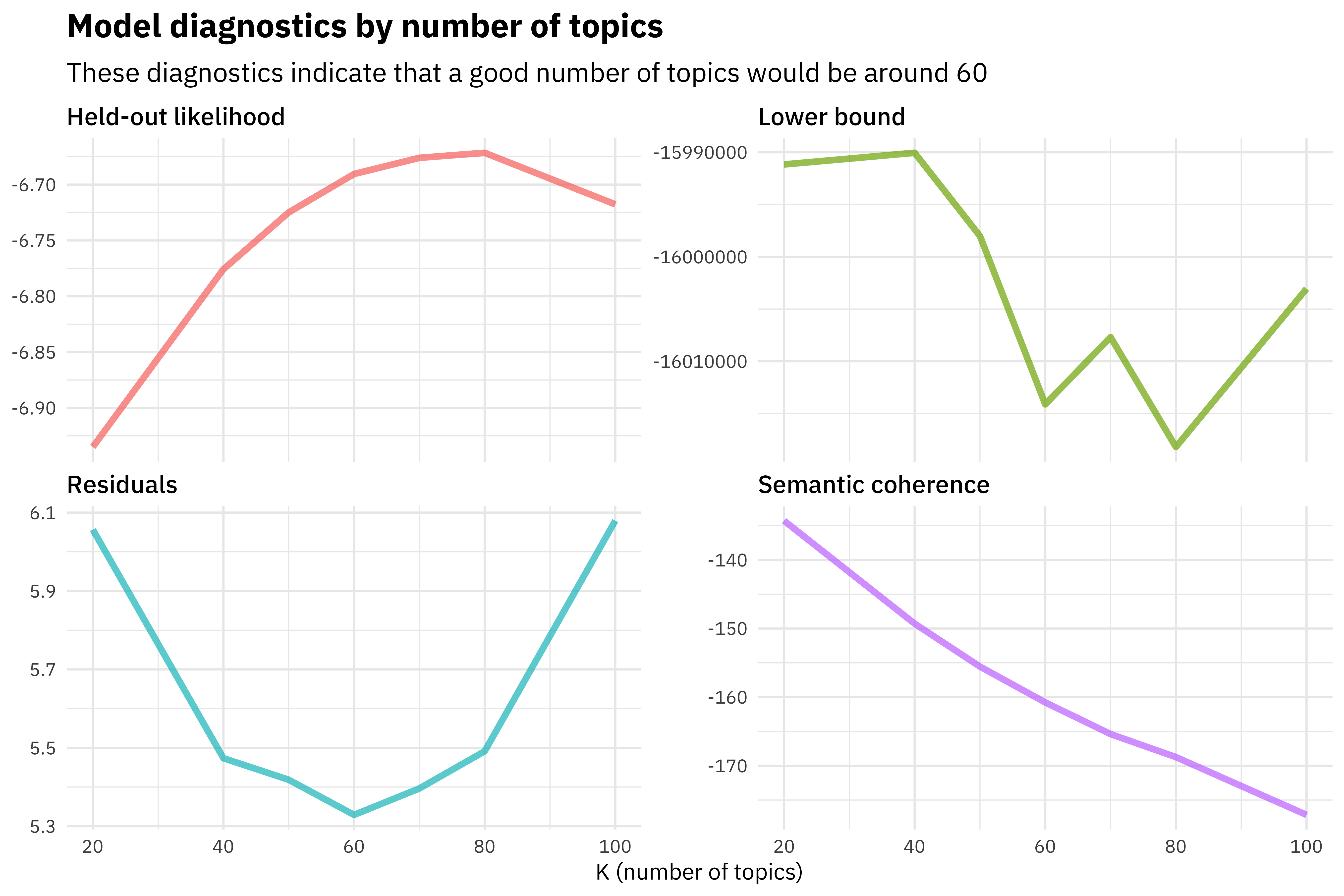

The Amazon SageMaker Latent Dirichlet Allocation (LDA) algorithm is an unsupervised learning algorithm that attempts to describe a set of observations as a mixture of distinct categories. In text applications, it is used to discover a user-specified number of topics shared by documents within a text corpus. Each observation is a document, the features are the presence (or occurrence count) of each word, and the categories are the topics. Since the method is unsupervised, the topics are not specified up front and are not guaranteed to align with how a human may naturally categorize documents. The topics are learned as a probability distribution over the words that occur in each document.

The exact content of two documents with similar topic mixtures will not be the same. However, you would expect these documents to use a shared subset of words more frequently than a document from a different topic mixture would. This allows LDA to discover these word groups and use them to form topics. As an extremely simple example, given a set of documents containing only a small vocabulary whose words include meow and bark, LDA might produce topics that group the words tending to occur together.

Choosing between Latent Dirichlet Allocation (LDA) and Neural Topic Model (NTM)

Topic models are commonly used to produce topics from corpora that (1) coherently encapsulate semantic meaning and (2) describe documents well. The goal is therefore to minimize perplexity and maximize topic coherence.

Perplexity is an intrinsic language modeling evaluation metric that measures the inverse of the geometric mean per-word likelihood in your test data. A lower score indicates better generalization performance. The likelihood computed per word often does not align with human judgement, and can be entirely uncorrelated with it, so topic coherence has been introduced as a second metric. Each inferred topic from your model consists of words, and topic coherence is computed over the top N words for that particular topic. It is often defined as the average or median of the pairwise word-similarity scores of the words in that topic, e.g., Pointwise Mutual Information (PMI). A promising model generates coherent topics, that is, topics with high topic coherence.

While the objective is to train a topic model that minimizes perplexity and maximizes topic coherence, there is often a tradeoff between the two with both LDA and NTM. Recent research by Amazon (Ding et al., 2018) has shown that NTM is promising for achieving high topic coherence, but LDA trained with collapsed Gibbs sampling achieves better perplexity. From a practical standpoint regarding hardware and compute power, SageMaker NTM is more flexible than LDA and can scale better, because NTM can run on CPU and GPU and can be parallelized across multiple GPU instances, whereas LDA only supports single-instance CPU training.

Input/Output Interface for the LDA Algorithm

LDA expects data to be provided on the train channel, and optionally supports a test channel, which is scored by the final model. Supported input formats are RecordIO-wrapped protobuf (dense and sparse) and CSV.
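The two evaluation metrics discussed when choosing between LDA and NTM can be made concrete with a small sketch. The code below is not how SageMaker computes its metrics; it only illustrates the definitions given in the text. The function names `perplexity` and `pmi_coherence`, the smoothing constant `eps`, the document-level co-occurrence estimate, and the toy corpus (including the words "purr" and "fetch") are all assumptions made for illustration.

```python
import math
from itertools import combinations


def perplexity(word_probs):
    """Inverse of the geometric mean of the per-word likelihoods.

    word_probs: probabilities a model assigned to each word of the test data.
    """
    mean_log_prob = sum(math.log(p) for p in word_probs) / len(word_probs)
    return math.exp(-mean_log_prob)


def pmi_coherence(top_words, documents, eps=1e-12):
    """Average pairwise PMI over a topic's top N words.

    Probabilities are estimated as the fraction of documents containing the
    word (or both words). eps is a hypothetical smoothing constant that keeps
    the logarithm finite when two words never co-occur.
    """
    doc_sets = [set(doc) for doc in documents]
    n_docs = len(doc_sets)

    def prob(*words):
        hits = sum(all(w in doc for w in words) for doc in doc_sets)
        return hits / n_docs

    scores = []
    for w1, w2 in combinations(top_words, 2):
        joint = prob(w1, w2)
        independent = prob(w1) * prob(w2)
        scores.append(math.log((joint + eps) / (independent + eps)))
    return sum(scores) / len(scores)


# Hypothetical toy corpus: each document is a list of words.
toy_docs = [
    ["meow", "purr"],
    ["meow", "purr"],
    ["bark", "fetch"],
    ["bark", "fetch"],
]

uniform_ppl = perplexity([0.25, 0.25, 0.25, 0.25])
coherent_topic = pmi_coherence(["meow", "purr"], toy_docs)
mixed_topic = pmi_coherence(["meow", "bark"], toy_docs)
```

In this toy setup, a topic whose top words always appear together scores higher than one mixing words that never co-occur, which matches the intuition that coherent topics group related words. A model that assigns every test word the same probability `p` has perplexity `1 / p`.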
